July 8, 2014

July 8, 2014

July 3, 2014

simon - 2 -- [4:15]

simon - 3 -- [6:52]

simon - 4 -- [2:39]

simon - 5 -- [4:13]

simon - 6 -- [3:28]

simon - 7 -- [3:00]

simon - 8 -- [2:46]

simon - 9 -- [7:52]

simon - 10 -- [3:03]

simon - 11 -- [4:58]

July 2, 2014

The above performance gain aside, I am still not satisfied with the bitmap drawing performance on recent OSX versions, which has led me to benchmark SWELL's blitting code. My test uses the LICE test application, with a screen full of lines, an opaque NSView, and 720x500 resolution.

OSX 10.6 vs 10.8 on a C2D iMac

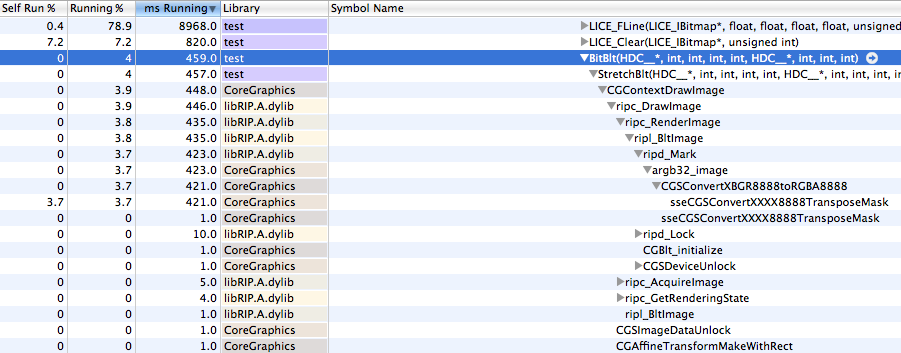

My (C2D 2.93GHz) iMac running 10.6 easily runs the benchmark at close to 60 FPS, using about 45% of one core, with the BitBlt() call typically taking 1ms for each frame.

Here is a profile -- note that CGContextDrawImage() accounts for a modest 3.9% of the total CPU use:

It might be possible to reduce the work required by changing our bitmap representation from ABGR to RGBA (avoiding sseCGSConvertXXXX8888TransposeMask and performing a memcpy() instead), but in my opinion 1ms for a good-sized blit (and less than 4% of total CPU time for this demo) is totally acceptable.
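To make the ABGR/RGBA point concrete: when the bitmap's byte order differs from what the blitter wants, every pixel has to be shuffled on the way through. When the layouts match, the blit reduces to a memcpy(). The sketch below illustrates that difference in plain C. It assumes 4-byte pixels stored as A,B,G,R in memory. The function names are hypothetical, and CoreGraphics' own converter (sseCGSConvertXXXX8888TransposeMask) is SSE-optimized and not reproduced here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical helper: reorder each 4-byte pixel from A,B,G,R byte order
   to R,G,B,A byte order (a full byte reversal within each pixel).
   Not safe in place: dst and src must not overlap. */
static void transpose_abgr_to_rgba(uint8_t *dst, const uint8_t *src, size_t npixels)
{
  assert(dst != src);
  for (size_t i = 0; i < npixels; i++) {
    const uint8_t *s = src + 4 * i;
    uint8_t *d = dst + 4 * i;
    d[0] = s[3]; /* R */
    d[1] = s[2]; /* G */
    d[2] = s[1]; /* B */
    d[3] = s[0]; /* A */
  }
}

/* When source and destination layouts already match, the blit
   degenerates to a straight copy. */
static void copy_matching_layout(uint8_t *dst, const uint8_t *src, size_t npixels)
{
  memcpy(dst, src, 4 * npixels);
}
```

The per-pixel loop touches and rewrites every byte, while the matching-layout case is a single bulk copy. That gap is why the representation change might help.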

I then rebooted the C2D iMac into OSX 10.8 (Mountain Lion) for a similar test.

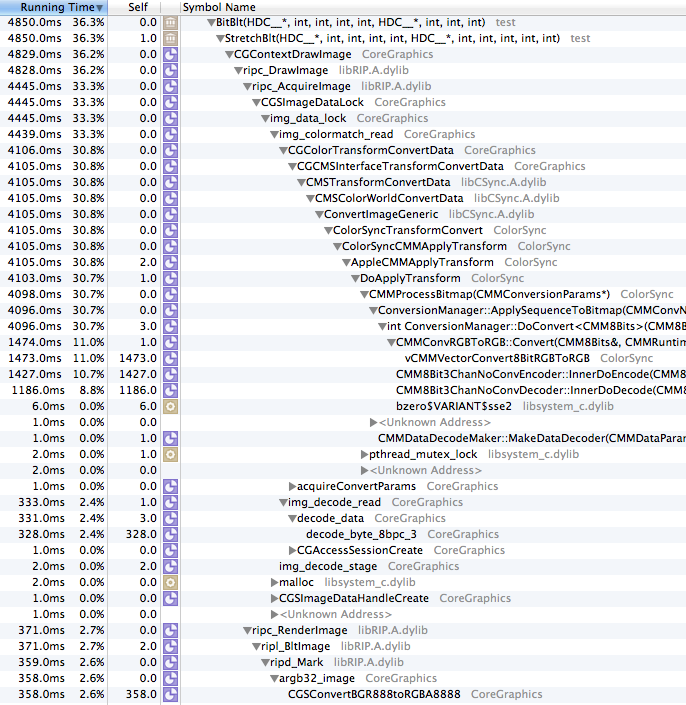

Running the same benchmark on the same hardware in Mountain Lion, we see that each call to BitBlt() takes over 6ms, the application struggles to exceed 57 FPS, and the CPU usage is much higher, at about 73% of a core.

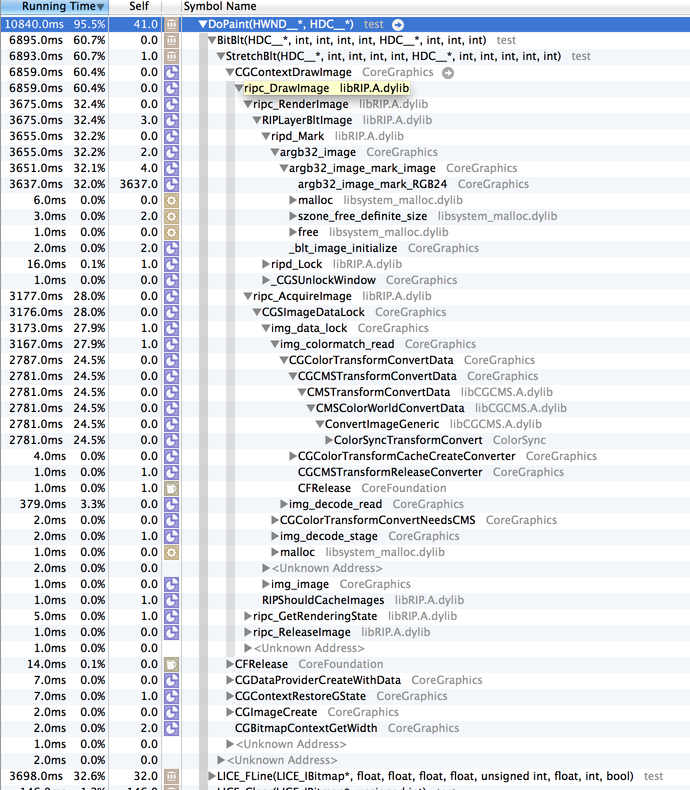

Here is the time sampling of CGContextDrawImage() -- in this case it accounts for 36% of the total CPU use!

Comparing the two profiles, it is obvious where most of the additional processing takes place -- within img_colormatch_read and CGColorTransformConvertData, where color matching transformations are apparently applied.

I'm happy that Apple cares about color matching, but forcing it on (without giving developers control over it) is wasteful. I'd much rather have the ability to transform the colors myself before rendering and then blit quickly to screen, than have every single pixel pushed to the screen be color-transformed. There may be some magical way to pass the right colorspace value to CGImageCreate() to bypass this, but I have not found it yet (and I have spent a great deal of time looking, and trying things like querying the monitor's colorspace).

That's what OpenGL is for!

But wait, you say -- the preferred way to quickly draw to screen is OpenGL.

Updating a complex project to use OpenGL would be a lot of work, but for this test project I did implement a very naive OpenGL blit, which enabled an OpenGL context for the view and created a texture for drawing each frame, more or less like:

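// use a rectangle texture: arbitrary (non-power-of-two) sizes, texel coordinates
glDisable(GL_TEXTURE_2D);
glEnable(GL_TEXTURE_RECTANGLE_EXT);
// create a temporary texture for this frame
GLuint texid=0;
glGenTextures(1, &texid);
glBindTexture(GL_TEXTURE_RECTANGLE_EXT, texid);
// sw = row span of the source bitmap, in pixels (may be larger than w)
glPixelStorei(GL_UNPACK_ROW_LENGTH, sw);
glTexParameteri(GL_TEXTURE_RECTANGLE_EXT, GL_TEXTURE_MIN_FILTER, GL_LINEAR);
// upload the w x h pixels at p
glTexImage2D(GL_TEXTURE_RECTANGLE_EXT,0,GL_RGBA8,w,h,0,GL_BGRA,GL_UNSIGNED_INT_8_8_8_8, p);
// one quad covering the whole view; texture row 0 maps to the top edge
glBegin(GL_QUADS);
glTexCoord2f(0.0f, 0.0f);
glVertex2f(-1,1);
glTexCoord2f(0.0f, h);
glVertex2f(-1,-1);
glTexCoord2f(w,h);
glVertex2f(1,-1);
glTexCoord2f(w, 0.0f);
glVertex2f(1,1);
glEnd();
// discard the per-frame texture and submit the commands
glDeleteTextures(1,&texid);
glFlush();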

This resulted in better performance on OSX 10.8, each BitBlt() taking about 3ms, framerate increasing to 58, and the CPU use going down to about 50% of a core. It's an improvement over CoreGraphics, but still not as fast as CoreGraphics on 10.6.

The memory use when using OpenGL blitting increased by about 10MB, which may not sound like much, but if you are drawing to many views, the RAM use would potentially increase with each view.

I also tested the OpenGL implementation on 10.6, but it was significantly slower than CoreGraphics: 3ms per frame, nearly 60 FPS but CPU use was 60% of a core, so if you do ever implement OpenGL blitting, you will probably want to disable it for 10.6 and earlier.

Core 2 Duo?! That's ancient, get a new computer!

After testing on the C2D, I moved back to my modern quad-core i7 Retina MacBook Pro running 10.9 (Mavericks) and ran similar tests.

- Normal: 12-14ms per frame, 46 FPS, 70% of a core CPU use

- Normal, in "Low Resolution" mode: 6-7ms per frame, 58 FPS, 60% of a core CPU use

- Normal, without kCGInterpolationNone: 29ms per frame, 29 FPS, 70% of a core CPU use

- Normal, in "Low Resolution" mode, without kCGInterpolationNone: same as with kCGInterpolationNone

- GL: 1-2ms per frame, 57 FPS, 37% of a core CPU use

- GL, in "Low Resolution" mode: 1-2ms per frame, 57 FPS, 40% of a core CPU use

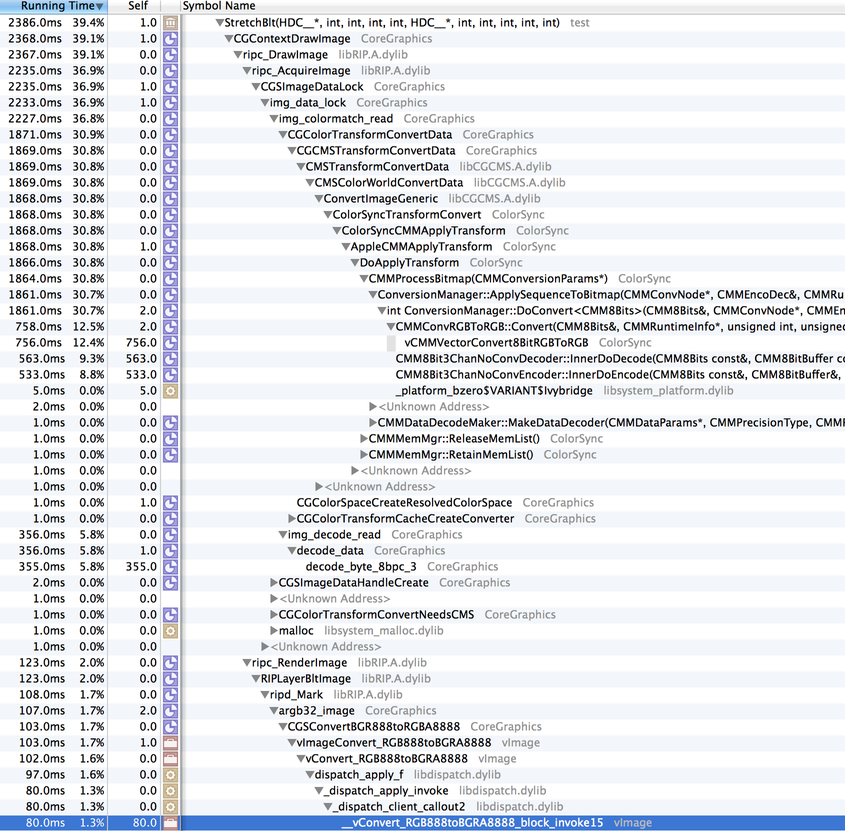

Let's see where the time is spent in the "Normal, Low Resolution" mode:

This looks very similar to the 10.8, non-retina rendering, though some function names have changed. There is the familiar img_colormatch_read/CGColorTransformConvertData call which is eating a good chunk of CPU. The ripc_RenderImage/ripd_Mark/argb32_image stack is similar to 10.8, and reasonable in CPU cycles consumed.

The Low Resolution mode really does behave similarly to 10.8 (though it's depressing to see that it still takes as long on an i7 as 10.8 did on a C2D, hmm). Let's look at the full-resolution Retina mode:

img_colormatch_read is present once again, but what's new is that ripc_RenderImage/ripd_Mark/argb32_image have a new implementation, calling argb32_image_mark_RGB24 -- and argb32_image_mark_RGB24 is a beast! It uses more CPU than just about anything else. What is going on there?

Conclusions

If you ever feel as if modern OSX versions have gotten slower when it comes to updating the screen, you would be right. The basic method of drawing pixels rendered in a platform-independent fashion to the screen has gotten significantly slower since Snow Leopard, most likely in the name of color accuracy. In my opinion this is an oversight on Apple's part, and they should extend the CoreGraphics APIs to allow manual application of color correction.

Additionally, I'm suspicious that something odd is going on within the function argb32_image_mark_RGB24, which appears to only be used on Retina displays, and that the performance of that function should be evaluated. Improving the efficiency of that function would have a positive impact on the performance of many third party applications (including REAPER).

If anybody has an interest in duplicating these results or doing further testing, I have pushed the updates to the LICE test application to our WDL git repository (see WDL/lice/test/).

Update: July 3, 2014

After some more work, I've managed to get the CPU use down to a respectable level in non-Retina mode (10.8 on the iMac, 10.9/Low Resolution on the Retina MBP), by using the system monitor's colorspace:

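CGColorSpaceRef cs = NULL; // colorspace later used when creating the image
CMProfileRef systemMonitorProfile = NULL;
CMError getProfileErr = CMGetSystemProfile(&systemMonitorProfile);
if(noErr == getProfileErr)
{
  cs = CGColorSpaceCreateWithPlatformColorSpace(systemMonitorProfile);
  CMCloseProfile(systemMonitorProfile);
}
// if this fails, cs stays NULL; release a non-NULL cs with CGColorSpaceRelease() when done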

Using this colorspace with CGImageCreate() prevents CGContextDrawImage() from calling img_colormatch_read/CGColorTransformConvertData/etc. On the C2D running 10.8, this gets the blit down to 1-2ms per frame, which is reasonable. However, this mode appears to be slower on the Retina MBP in high resolution mode. There it calls argb32_image_mark_RGB32 instead of argb32_image_mark_RGB24 (presumably operating on my buffer directly rather than on the intermediate colorspace-converted buffer), which is even slower.

Update: July 3, 2014, later

OK, if you provide a bitmap that is twice the size of the drawing rect, you can avoid argb32_image_mark_RGBXX and get the Retina display to update in about 5-7ms. That is a good improvement (but by no means impressive, given how powerful this machine is). I made a very simple software scaler (that turns each pixel into 4), and it uses very little CPU. So this is acceptable as a workaround (though Apple should really optimize their implementation). We're now at around 6ms, which is way better than 12-14ms (or 29ms, which is where we were last week!), but there's no reason this can't be faster. Update (2017): the method described here was only "faster" because it triggered multiprocessing; see this new post for more information.
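A software scaler that "turns each pixel into 4" is a 2x nearest-neighbour upscale: each source pixel is written to a 2x2 block of the destination. The following is a minimal C sketch of that idea, not the actual SWELL code. The function name is hypothetical, pixels are assumed to be 32-bit values, and row spans are given in pixels so the source can be a sub-rectangle of a larger bitmap.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical 2x nearest-neighbour upscaler: every source pixel becomes a
   2x2 block in the destination. Spans (row strides) are in pixels. The
   destination must hold at least 2*h rows of at least 2*w pixels. */
static void scale2x(uint32_t *dst, size_t dst_span,
                    const uint32_t *src, size_t src_span,
                    size_t w, size_t h)
{
  assert(dst_span >= 2 * w && src_span >= w);
  for (size_t y = 0; y < h; y++) {
    const uint32_t *s = src + y * src_span;
    uint32_t *d0 = dst + (2 * y) * dst_span; /* first output row */
    uint32_t *d1 = d0 + dst_span;            /* second output row */
    for (size_t x = 0; x < w; x++) {
      uint32_t p = s[x];
      d0[2 * x] = d0[2 * x + 1] = p;
      d1[2 * x] = d1[2 * x + 1] = p;
    }
  }
}
```

Each source pixel is read once and each destination pixel is written once, with no filtering, which is why this kind of scaler costs so little CPU.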

As a nice side effect, I'm adding SWELL_IsRetinaDC(), so we can start making some things Retina aware -- JSFX GUIs would be a good place to start...


March 13, 2014

December 18, 2013

December 14, 2013

- OSX version

- Can load multiple scripts, which run independently but can share hardware

- Better string syntax (normal quotes rather than silly {} etc), user strings identified by values 0..1023

- Better string manipulation APIs (sprintf(), strcpy(), match(), etc).

- match() and oscmatch(), which can be used for simple regular expressions with the ability to extract values

- Ability to detect stale devices and reopen them

- Scripts can output text to the newly resizable console, including basic terminal emulation (\r, and \33[2J for clear)

- Vastly improved icon
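The "basic terminal emulation" in the console can be modeled roughly as below. This is a minimal sketch under stated assumptions, not the actual implementation:

- a single fixed-size text buffer holds the console contents;
- \r moves the cursor back to the start of the current line, so later text overwrites it;
- \n always starts a new line at the end of the buffer;
- \33[2J clears everything.

The type and function names are hypothetical.

```c
#include <assert.h>
#include <string.h>

#define CONSOLE_CAP 4096

/* Hypothetical console model: a text buffer plus a write cursor. */
typedef struct {
  char text[CONSOLE_CAP];
  size_t len;    /* characters stored, excluding the terminator */
  size_t cursor; /* next write position */
} console_t;

static void console_clear(console_t *c)
{
  c->len = c->cursor = 0;
  c->text[0] = '\0';
}

static void console_write(console_t *c, const char *s)
{
  assert(c != NULL && s != NULL);
  while (*s) {
    if (strncmp(s, "\33[2J", 4) == 0) { /* ESC [ 2 J: clear the console */
      console_clear(c);
      s += 4;
      continue;
    }
    char ch = *s++;
    if (ch == '\r') { /* back to the start of the current line */
      while (c->cursor > 0 && c->text[c->cursor - 1] != '\n') c->cursor--;
      continue;
    }
    if (ch == '\n') c->cursor = c->len; /* newlines always go at the end */
    if (c->cursor >= CONSOLE_CAP - 1) continue; /* buffer full: drop */
    c->text[c->cursor++] = ch; /* overwrite or append */
    if (c->cursor > c->len) c->len = c->cursor;
    c->text[c->len] = '\0';
  }
}
```

With this model, a script can redraw a progress line by writing "\r" followed by new text, and wipe the whole console by writing "\33[2J".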

The thing I'm most excited about here is the creation of eel_strings.h, a framework for extending EEL2 (the scripting engine that powers JSFX, for one) with string support. Adding string support to JSFX will be pretty straightforward, so we'll likely be doing that in the next few weeks. Fun stuff. Very few things are as satisfying as making programming languages that are fun to use...


November 15, 2013

November 14, 2013

Here's another project, this one took less than 24 hours to make. It's a little win32 program called "midi2osc" (license: GPL, binary included, code requires WDL to compile), and it simply listens to any number of MIDI devices, and broadcasts to any number of destinations via OSC-over-UDP.

MIDI and OSC use completely different types of encoding -- MIDI consists of 1-3 byte sequences (excluding sysex), and OSC is encoded as a string and any number of values (strings, floats, integers, whatever). It would be very easy to make a simplistic conversion of every MIDI event, such as 90 53 7f being converted to "/note/on/53" with an integer value of 7f, and so on. This would be useful, but also might be somewhat limited.
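As an illustration of that simplistic approach, the C sketch below turns a note-on such as 90 53 7f into the address "/note/on/53" with the integer value 0x7f. It formats in hex to mirror the example. The function name and the "/note/off/" and "/cc/" addresses are hypothetical, chosen only for illustration.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical fixed mapping from a 3-byte MIDI message to an OSC address
   plus one integer argument. Returns 1 if a mapping exists, 0 otherwise. */
static int midi_to_osc_simple(const unsigned char msg[3],
                              char *addr, size_t addrlen, int *value)
{
  assert(addr != NULL && value != NULL);
  switch (msg[0] & 0xf0) {
    case 0x90: snprintf(addr, addrlen, "/note/on/%02x", (unsigned)msg[1]); break;
    case 0x80: snprintf(addr, addrlen, "/note/off/%02x", (unsigned)msg[1]); break;
    case 0xb0: snprintf(addr, addrlen, "/cc/%02x", (unsigned)msg[1]); break;
    default: return 0; /* e.g. pitch bend (0xe0) has no fixed mapping here */
  }
  *value = msg[2];
  return 1;
}
```

The fixed table is what makes it limited. For example, the R24's faders send pitch bend (0xe0), which this mapping ignores entirely and which needs both data bytes combined to be useful.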

In order to make this as useful as possible, I made it use EEL2 to enable user scripting of events. EEL2 is a fast scripting engine designed for floating point computation, that was originally developed as part of Nullsoft AVS, and evolved as part of our Jesusonic/JSFX code. EEL2 compiles extremely quickly to native code, and can have context that is used by code running in multiple threads simultaneously.

For this project the EEL2 syntax was extended slightly, via the use of a preprocessor, so that you can specify format strings for OSC. For example, you can tell REAPER to set a track's volume via:

oscfmt0 = trackindex;

oscsend(destination, { "/track/%.0f/volume" }, 0.5);

Internally, { xyz } is stored to a string table and inserted as a magic number which refers to that string table entry. It is cheap, but it works.
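A rough C model of that string-table trick follows. Each { ... } literal is stored in a table and replaced by a number, and at oscsend() time the number is mapped back to its format string, which is then formatted with oscfmt0. The names and the base value (90000) are hypothetical. The base is simply assumed to be large enough not to collide with ordinary values.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

#define STR_MAGIC_BASE 90000.0 /* hypothetical offset for magic numbers */
#define STR_TABLE_MAX 256

static const char *g_str_table[STR_TABLE_MAX];
static int g_str_count;

/* Store a string literal; return the number that replaces it in code. */
static double str_register(const char *s)
{
  assert(g_str_count < STR_TABLE_MAX);
  g_str_table[g_str_count] = s;
  return STR_MAGIC_BASE + g_str_count++;
}

/* Map a value back to its string, or NULL if it is not a magic number. */
static const char *str_lookup(double v)
{
  double idx = v - STR_MAGIC_BASE;
  if (idx < 0 || idx >= g_str_count || idx != (int)idx) return NULL;
  return g_str_table[(int)idx];
}

/* Format a registered string using oscfmt0 as its numeric parameter. */
static int str_format(char *out, size_t outlen, double fmt_id, double oscfmt0)
{
  const char *fmt = str_lookup(fmt_id);
  if (!fmt) return -1;
  return snprintf(out, outlen, fmt, oscfmt0);
}
```

Registering "/track/%.0f/volume" and formatting it with oscfmt0 = 3 yields "/track/3/volume". Any value that is not a table entry (such as 0.5) is rejected.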

Other than that pretty much everything else was a matter of copying and pasting some tedious bits (win32 MIDI device input, OSC message construction and bundling) and writing small bits of glue.
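For reference, the OSC message construction step looks roughly like the sketch below, which follows the OSC 1.0 encoding. The address is a NUL-terminated string padded to a multiple of 4 bytes. It is followed by a type tag string (",f" for one float32), padded the same way, and then the float in big-endian byte order. The function names are hypothetical, and bundling is not shown.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Append an OSC-string (characters, NUL, NUL padding to a multiple of 4).
   Returns the new length, or 0 if it would not fit. */
static size_t osc_put_string(uint8_t *buf, size_t len, size_t cap, const char *s)
{
  size_t n = strlen(s) + 1; /* include the terminator */
  size_t padded = (n + 3) & ~(size_t)3;
  if (len + padded > cap) return 0;
  memcpy(buf + len, s, n);
  memset(buf + len + n, 0, padded - n);
  return len + padded;
}

/* Build a one-float OSC message. Returns its length, or 0 if buf is too small. */
static size_t osc_build_float_msg(uint8_t *buf, size_t cap, const char *addr, float value)
{
  assert(buf != NULL && addr != NULL);
  size_t len = osc_put_string(buf, 0, cap, addr);
  if (!len) return 0;
  len = osc_put_string(buf, len, cap, ",f"); /* type tag string */
  if (!len || len + 4 > cap) return 0;
  uint32_t bits;
  memcpy(&bits, &value, sizeof bits); /* IEEE 754 bit pattern */
  buf[len++] = (uint8_t)(bits >> 24); /* big-endian (network order) */
  buf[len++] = (uint8_t)(bits >> 16);
  buf[len++] = (uint8_t)(bits >> 8);
  buf[len++] = (uint8_t)bits;
  return len;
}
```

For "/track/1/volume" with 0.5, this gives 16 bytes of address, 4 bytes of ",f" type tag, and the 4 bytes 3f 00 00 00, for 24 bytes total. The result is ready to send in a UDP datagram.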

Since writing this, I've found myself fixing a lot of small OSC issues in REAPER. I always tell people how using the thing you make is very important -- I should update that to include the necessity of having good test environments (plural).

Why did I make this? My Zoom R24. This is a great device, but the Windows 7/64 driver has some issues. Particularly:

- If the MIDI input device of the R24 is not opened, audio playback is not reliable. This includes when listening to Winamp or watching YouTube. So basically, for this thing to be useful, I need something to keep hitting the MIDI device constantly. So for you Zoom R24 win64 users who have this problem, midi2osc might be able to fix your problems.

- If REAPER crashes or is otherwise debugged with the MIDI device open, the process hangs and it's a pain to continue. Moving the MIDI control to a separate process that can run in the system tray = win.

- (not midi2osc related): I wish the drum pads would send MIDI too... *ahem*

// @input lines:
// usage: @input devicenameforcode "substring match" [skip]
// can use any number of inputs. devicenameforcode must be unique, if you specify multiple @input lines
// with common devicenameforcode, it will use the first successful line and ignore subsequent lines with that name
// you can use any number of devices, too
@input r24 "ZOOM R"

// @output lines
// usage: @output devicenameforcode "127.0.0.1:8000" [maxpacketsize] [sleepamt]
// maxpacketsize is 1024 by default, can lower or raise depending on network considerations
// sleepamt is 10 by default, sleeps for this many milliseconds after each packet. can be 0 for no sleep.
@output localhost "127.0.0.1:8000"

@init
// called at init-time
destdevice = localhost; // can also be -1 for broadcast
// 0= simplistic /track/x/volume, /master/volume
// 1= /r24/rawfaderXX (00-09)
// 2= /action/XY/cc/soft (tracks 1-8), master goes to /r24/rawfader09
fader_mode=2;

@timer
// called around 100Hz, after each block of @msg

@msg
// special variables:
// time (seconds)
// msg1, msg2, msg3 (midi message bytes)
// msgdev == r24 // can check which device, if we care
(msg1&0xf0) == 0xe0 ? (
  // using this to learn for monitoring fx, rather than master track
  fader_mode > 0 ? (
    fmtstr = { f/r24/rawfader%02.0f }; // raw fader
    oscfmt0 = (msg1&0xf)+1;
    fader_mode > 1 && oscfmt0 != 9 ? (
      fmtstr = { f/action/%.0f/cc/soft }; // this is soft-takeover, track 01-08 volume
      oscfmt0 = ((oscfmt0-1) * 8) + 20;
    );
    val=(msg2 + (msg3*128))/16383;
    val=val^0.75;
    oscsend(destdevice,fmtstr,val);
  ) : (
    fmtstr = (msg1&0xf) == 8 ? { f/master/volume } : { "f/track/%.0f/volume"};
    oscfmt0 = (msg1&0xf)+1;
    oscsend(destdevice,fmtstr,(msg2 + (msg3*128))/16383);
  );
);

msg1 == 0x90 ? (
  msg2 == 0x5b ? oscsend(destdevice, { b/rewind }, msg3>64);
  msg2 == 0x5c ? oscsend(destdevice, { b/forward }, msg3>64);
  msg3>64 ? (
    oscfmt0 = (msg2&7) + 1;
    msg2 < 8 ? oscsend(destdevice, { t/track/%.0f/recarm/toggle }, 0) :
    msg2 < 16 ? oscsend(destdevice, { t/track/%.0f/solo/toggle }, 0) :
    msg2 < 24 ? oscsend(destdevice, { t/track/%.0f/mute/toggle }, 0) :
    (
      msg2 == 0x5e ? oscsend(destdevice, { b/play }, 1);
      msg2 == 0x5d ? oscsend(destdevice, { b/stop }, 1);
      msg2 == 0x5f ? oscsend(destdevice, { b/record }, 1);
    )
  );
);

msg1 == 0xb0 ? (
  msg2 == 0x3c ? (
    oscsend(destdevice, { f/action/992/cc/relative }, ((msg3&0x40) ? -1 : 1));
  );
);

The 9th fader sends "/r24/rawfader09" because I have that OSC string mapped (with soft-takeover) to a volume plug-in in my monitoring FX chain.


October 21, 2013

October 19, 2013

Here are a couple of test projects (which include the mod/xm as well as the converted files): spathi.mod (from SC2), and resistance_is_futile.xm.

In case there's any question, it turns out the tracker format is incredibly efficient for both editing and playback... compared to a DAW.


October 15, 2013

ezequiel - 2 -- [5:13]

ezequiel - 3 -- [5:15]

ezequiel - 4 -- [11:13]

ezequiel - 5 -- [3:31]

ezequiel - 6 -- [5:06]

ezequiel - 7 -- [7:24]

ezequiel - 8 -- [12:08]

ezequiel - 9 -- [9:11]

ezequiel - 10 -- [21:54]

ezequiel - 11 -- [4:06]

ezequiel - 12 -- [3:37]

October 7, 2013

July 19, 2013

July 18, 2013

Recordings:

kawha


July 3, 2013

June 27, 2013

June 17, 2013

Some initial comments on the data:

- The average speed is calculated based on the total mileage counter, trip count, and average trip duration. After the first few days (whose data is a bit all over the place), it settles in at about 7.4-7.5 MPH. Do we think that their distance calculation is A) as the bird flies, B) bird-distance * cityadjustmentfactor, or C) the most likely route in Google Maps? Probably safe to rule out C, and given the top speed of these things is probably around 15 MPH, I'd say it's probably B (some 1.3x cityadjustmentfactor, or something).

- If we assume that the last 5 days worth of data on day and week passes hold steady (I know, unlikely, as factors such as season, initial buzz, conversion to annual members, and so on will affect things greatly), these will bring in $9.3 million and $2.2 million per year respectively.

- If annual members top out at 80,000 (a little under twice what it is now, I would say this is very conservative), that'll be around $7.6 million per year.

- It becomes apparent why they are called "Citibike" and also have Mastercard logos on them (as they ponied up something like $41 million and $6 million respectively -- I wonder if this is one time or per year).

- Hopefully annual membership will continue to grow well past 80,000, though, and presumably there will be extra revenue from late fees...

- I wonder what their operating budget is...

- OK, discussions of money are sort of a bummer (not because of whether this is feasible or not, but just because talking about money makes me uncomfortable): the average trip length of 2-3 miles is pleasing. 2 miles is a long way in Manhattan; glad to see people are making good use of the bikes!
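The arithmetic behind two of the points above can be written down directly:

- The average speed is total miles divided by total riding time, where riding time is trips times average trip duration.
- The annual-member estimate implies a per-member figure.

This is a small C sketch with hypothetical function names. It uses no data beyond the figures quoted above.

```c
#include <assert.h>

/* Average speed implied by the published counters: total miles divided by
   total riding time (trips x average trip duration). */
static double avg_speed_mph(double total_miles, double trips, double avg_trip_minutes)
{
  assert(trips > 0 && avg_trip_minutes > 0);
  return total_miles / (trips * avg_trip_minutes / 60.0);
}

/* Revenue per member implied by an annual total. */
static double per_member(double annual_revenue, double members)
{
  assert(members > 0);
  return annual_revenue / members;
}
```

Applied to the membership estimate, per_member(7.6e6, 80000) gives $95 per member per year, which is the annual fee implied by those two figures.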

If anybody makes any interesting graphs or correlations or extrapolations with that data, post 'em (or links) in the comments...


April 4, 2013

March 27, 2013
